CAST: Achieving Stable LLM-based Text Analysis for Data Analytics

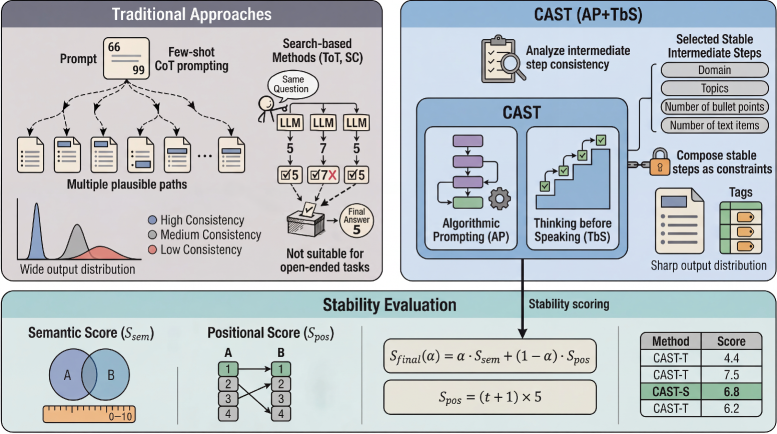

To address the run-to-run instability of LLM-based text analysis, we introduce CAST (Consistency via Algorithmic Prompting and Stable Thinking), a framework that enhances output stability by constraining the model's latent reasoning path. CAST combines (i) Algorithmic Prompting, which imposes a procedural scaffold over valid reasoning transitions, and (ii) Thinking-before-Speaking, which enforces explicit intermediate commitments before final generation.

To measure progress, we introduce CAST-S and CAST-T, stability metrics for bulleted summarization and tagging, and validate their alignment with human judgments. Experiments across publicly available benchmarks on multiple LLM backbones show that CAST consistently achieves the best stability among all baselines, improving Stability Score by up to 16.2%, while maintaining or improving output quality.

Key Contributions

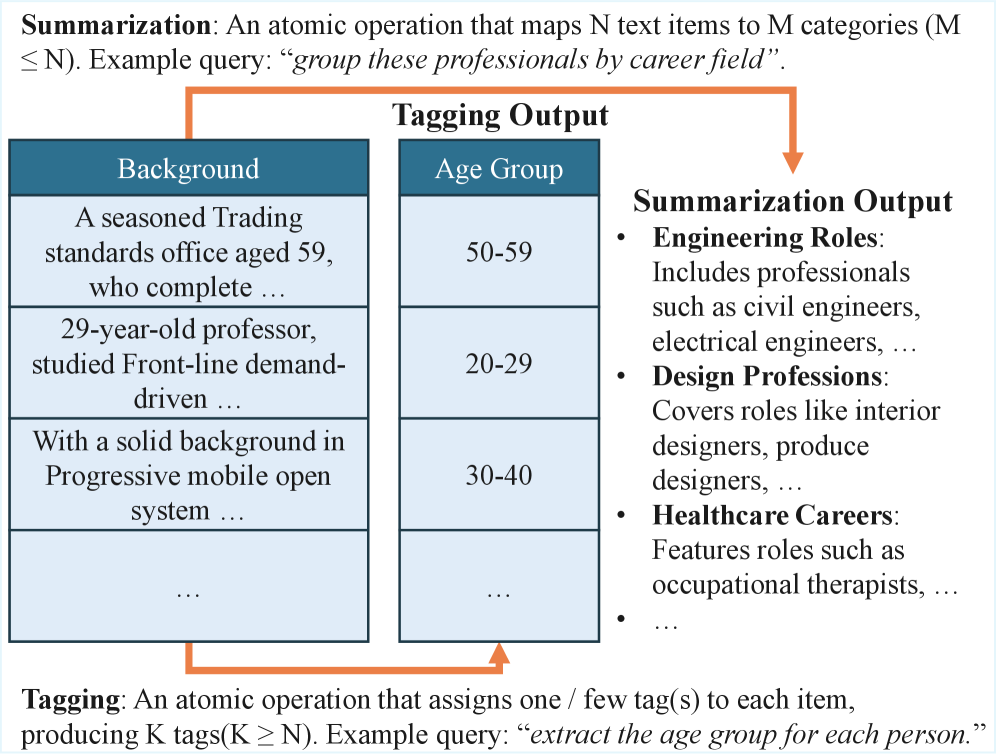

- Formalization of TADA: We formalize Text Analysis for Data Analytics (TADA) as a tabular-centric paradigm, highlighting stability as a functional necessity for integrating probabilistic LLM outputs into deterministic OLAP workflows.

- CAST Framework: A novel approach that constrains generation via Algorithmic Prompting and intermediate commitments, reducing the entropy of latent paths without expensive search-based methods.

- Stability Metrics: We introduce CAST-S and CAST-T, stability-focused evaluation metrics combining semantic matching with order sensitivity (Kendall's Tau) to capture human-perceived consistency.

- Strong Empirical Results: Up to 16.2% improvement in Stability Score across multiple LLM backbones, with no regression in accuracy.
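
To make the idea behind the stability metrics concrete, here is a minimal sketch of a score that combines item matching with Kendall's Tau for two runs of a bulleted summary. It is not the definition of CAST-S or CAST-T. The function names, the 0.6 threshold, and the way coverage and order are multiplied are all assumptions for illustration, and lexical similarity from `difflib` stands in for the semantic matching the metrics use.

```python
from difflib import SequenceMatcher
from itertools import combinations


def match_items(run_a, run_b, threshold=0.6):
    """Greedily pair each item of run_a with its most similar unused item of run_b.

    Lexical similarity is an assumed stand-in for a semantic matcher.
    Returns a list of (index_in_a, index_in_b) pairs.
    """
    pairs, used = [], set()
    for i, a in enumerate(run_a):
        best_j, best_sim = None, threshold
        for j, b in enumerate(run_b):
            if j in used:
                continue
            sim = SequenceMatcher(None, a.lower(), b.lower()).ratio()
            if sim >= best_sim:
                best_j, best_sim = j, sim
        if best_j is not None:
            used.add(best_j)
            pairs.append((i, best_j))
    return pairs


def kendall_tau(pairs):
    """Kendall's tau over matched positions (no ties, since indices are unique)."""
    if len(pairs) < 2:
        return 1.0  # order is trivially preserved
    concordant = discordant = 0
    for (i1, j1), (i2, j2) in combinations(pairs, 2):
        sign = (i1 - i2) * (j1 - j2)
        if sign > 0:
            concordant += 1
        elif sign < 0:
            discordant += 1
    total = len(pairs) * (len(pairs) - 1) / 2
    return (concordant - discordant) / total


def toy_stability(run_a, run_b, threshold=0.6):
    """Hypothetical score in [0, 1]: matched coverage times rescaled tau."""
    if not run_a and not run_b:
        return 1.0
    pairs = match_items(run_a, run_b, threshold)
    coverage = 2 * len(pairs) / (len(run_a) + len(run_b))
    order = (kendall_tau(pairs) + 1) / 2  # map [-1, 1] to [0, 1]
    return coverage * order


bullets = ["battery life is short", "screen cracks easily", "shipping was slow"]
same_order = toy_stability(bullets, bullets)
reversed_order = toy_stability(bullets, bullets[::-1])
```

With these inputs, two identical runs score 1.0, while the same bullets in reversed order score 0.0 because every pair of matched items is discordant. The order term is what separates this from a plain set-overlap measure.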

Method

CAST addresses the instability problem by constraining the LLM's latent reasoning trajectory through two complementary mechanisms:

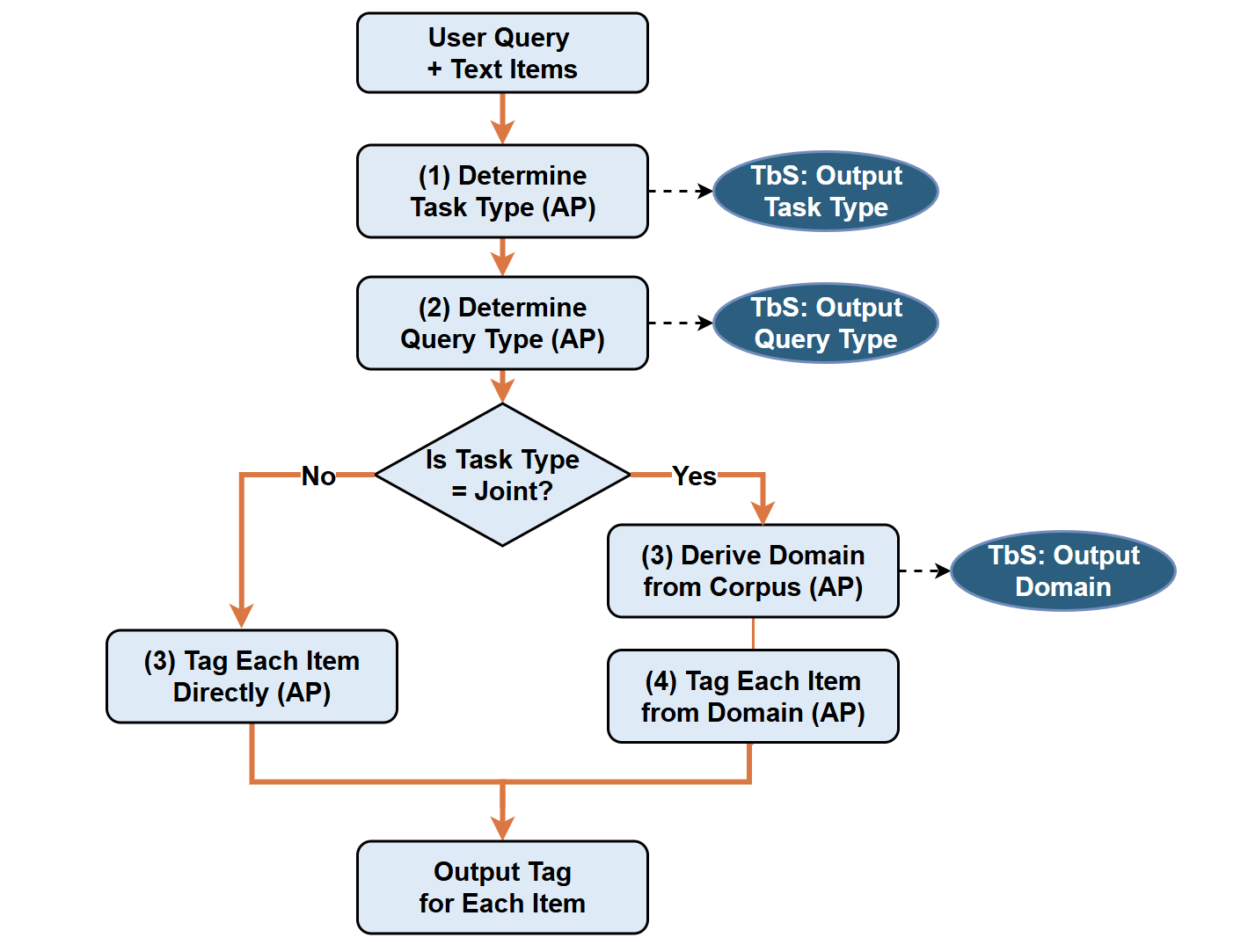

Algorithmic Prompting

Specifies an algorithmic scaffold for the task, translating classic deterministic workflows into a structured prompt sequence. This scaffold acts as a strong prior over valid reasoning transitions, effectively pruning high-entropy paths.
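
As a rough illustration of what an algorithmic scaffold could look like in practice, the sketch below renders an ordered list of steps into a single prompt. The step names and instruction wording are hypothetical and are not the prompts used by CAST; only the general idea of a fixed, ordered procedure comes from the description above.

```python
# Hypothetical steps for a bulleted-summarization task; wording is illustrative only.
SUMMARY_STEPS = [
    ("DOMAIN", "State the domain of the input texts in one short phrase."),
    ("TOPIC_SCHEMA", "List the topics that texts in this domain usually cover."),
    ("CLUSTERS", "Assign every input text to exactly one topic from the schema."),
    ("FINAL", "Write one bullet per non-empty cluster, largest cluster first."),
]


def build_scaffold_prompt(texts, steps=SUMMARY_STEPS):
    """Render the ordered steps and the numbered inputs as one prompt string."""
    lines = ["Follow the steps below in order. Do not skip or reorder them.", ""]
    for n, (name, instruction) in enumerate(steps, start=1):
        lines.append(f"Step {n} [{name}]: {instruction}")
    lines.append("")
    lines.append("Input texts:")
    for k, text in enumerate(texts, start=1):
        lines.append(f"{k}. {text}")
    return "\n".join(lines)


prompt = build_scaffold_prompt(["Battery dies by noon.", "Screen cracked in a week."])
```

Keeping the steps in a data structure rather than in free-form prose means the same procedure is issued verbatim on every call, so run-to-run variation cannot come from the prompt itself.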

Thinking-before-Speaking

Enforces the scaffold by requiring the model to produce well-defined intermediate states (domain, topic schema, clusters) before emitting the final output. By committing to these states, the model follows a more stable reasoning path.
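
One way to check that a response actually commits to its intermediate states is to parse it into named sections and reject it when a state is missing, empty, or out of order. The sketch below assumes the model was asked to emit `### NAME` headers; that header convention and the section names are assumptions for illustration, not the output format used by CAST.

```python
import re

# Assumed section names, mirroring the intermediate states named above.
REQUIRED_SECTIONS = ["DOMAIN", "TOPIC_SCHEMA", "CLUSTERS", "FINAL"]


def parse_committed_output(response, required=REQUIRED_SECTIONS):
    """Split a response into sections keyed by header name.

    Raises ValueError if the headers do not appear exactly in the required
    order, or if any section body is empty.
    """
    headers = list(re.finditer(r"^### (\w+)[ \t]*$", response, flags=re.MULTILINE))
    names = [h.group(1) for h in headers]
    if names != list(required):
        raise ValueError(f"expected sections {list(required)}, got {names}")
    sections = {}
    for header, following in zip(headers, headers[1:] + [None]):
        end = following.start() if following else len(response)
        body = response[header.end():end].strip()
        if not body:
            raise ValueError(f"section {header.group(1)} is empty")
        sections[header.group(1)] = body
    return sections


example = (
    "### DOMAIN\nconsumer electronics reviews\n"
    "### TOPIC_SCHEMA\nbattery, screen\n"
    "### CLUSTERS\n1: battery\n2: screen\n"
    "### FINAL\n- Battery drains quickly\n- Screen is fragile\n"
)
parsed = parse_committed_output(example)
```

A caller could retry generation when `ValueError` is raised, so that only responses with every intermediate state in place reach the downstream table.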

Empirical Observation

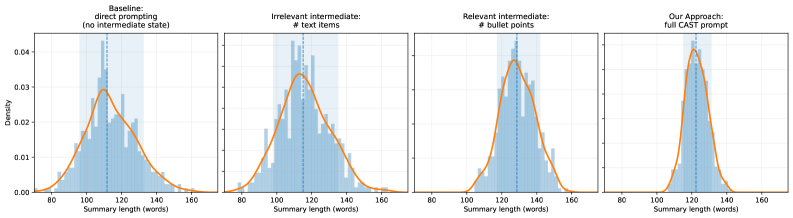

We observe empirically that requiring relevant intermediate states sharpens the model's output distribution. As the figure shows, CAST produces the sharpest and most concentrated distribution, indicating substantially improved run-to-run stability.
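
A simple way to quantify how sharp an output distribution is: run the same input several times and compute the Shannon entropy of the distinct outputs. This is a minimal sketch under the assumption that outputs can be compared by exact equality after normalization; it is not the analysis behind the figure, and `output_entropy` is a hypothetical helper.

```python
from collections import Counter
from math import log2


def output_entropy(outputs):
    """Shannon entropy (bits) of the empirical distribution over repeated outputs.

    0.0 means every run produced the same output; log2(n) means all n runs differed.
    """
    n = len(outputs)
    counts = Counter(outputs)
    return -sum((c / n) * log2(c / n) for c in counts.values())


stable_runs = ["billing", "billing", "billing", "billing"]
mixed_runs = ["billing", "billing", "refund", "refund"]
unstable_runs = ["billing", "refund", "pricing", "invoice"]

entropies = [output_entropy(r) for r in (stable_runs, mixed_runs, unstable_runs)]
```

For these three toy samples the entropies are 0, 1, and 2 bits respectively, so a lower value corresponds to a more concentrated distribution.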

Results

Citation

Acknowledgments: This work was done during the author's internship at Microsoft Research. We thank all colleagues and mentors from the DKI group for their support and valuable feedback.